Generative AI is getting closer to taking action in the real world. The big AI companies have already introduced AI agents that can do your web-based busywork, such as ordering groceries or making a dinner reservation. Today, Google DeepMind announced two generative AI models designed to power the robots of tomorrow.

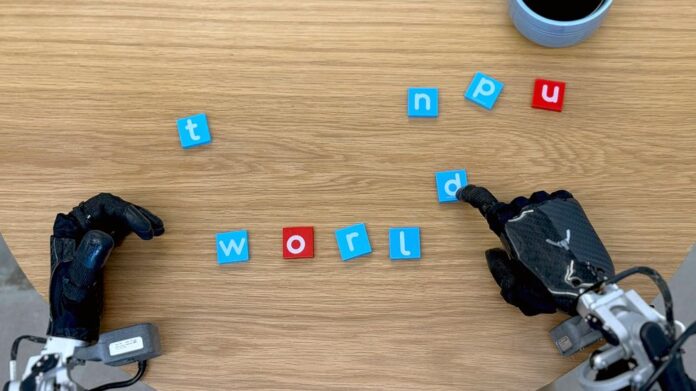

Both models are built on Google Gemini, a multimodal foundation model that can process text, voice, and image data to answer questions, provide advice, and generally help out. DeepMind calls the first of the new models, Gemini Robotics, an “advanced vision-language-action model,” meaning that it can take all those same inputs and then output instructions for a robot’s physical actions. The models are designed to work with any hardware system but were mainly tested on the two-armed Aloha 2 system that DeepMind launched last year.

In a demo video, a voice says, “Pick the basketball up and slam it” (at 2 minutes 27 seconds in the video below). A robot arm then carefully picks up a miniature basketball and drops it into a tiny net. While it wasn’t an NBA-level dunk, it was enough to get the DeepMind researchers excited.

Google DeepMind released a demo video showing the capabilities of its Gemini Robotics foundation model to control robots.

Gemini Robotics

Kanishka Rao, the principal software developer for the project, said at a press conference that the basketball example is his favorite. He said that although the robot “never saw anything related to basketball,” its foundation model understood the game in general, knew what a net looked like, and knew what “slam dunk” meant. Rao said the robot was able to “connect those [concepts] in order to actually accomplish the tasks in the physical world.”

What are the advancements of Gemini Robotics?

Carolina Parada, head of robotics for Google DeepMind, said that the new models improve on the company’s previous robots in three dimensions: generalization, adaptability, and dexterity. She said these improvements are needed to create a “new generation of helpful robots.”

The researchers examined visual generalization, instruction generalization, and action generalization. Parada said robots powered by Gemini are also better able to adapt to changing circumstances and instructions. In a video, a researcher told a robot arm to place a bunch of plastic grapes in a clear Tupperware container, then proceeded to move three containers around the table in the manner of a shyster running a shell game. The robot arm followed the clear container around until it could complete its directive.

Gemini Robotics adapts better to changing instructions and situations than previous models. Google DeepMind

Demo videos also showed the robotic arms performing delicate tasks, such as folding a piece of paper into an origami-style fox. It’s important to remember, however, that this impressive performance comes in the context of a narrow set of high-quality data on which the robot was trained for these specific tasks. The level of dexterity these tasks represent is not generalized.

What is embodied reasoning?

Gemini Robotics-ER is the second model introduced today. The ER stands for “embodied reasoning,” a type of intuitive physical understanding that humans develop over time. DeepMind wants to emulate that intuition by allowing Gemini Robotics-ER to make educated guesses about how to interact with an object it has never seen before.

Parada showed an example of Gemini Robotics-ER identifying an appropriate grasping point for picking up a coffee cup. The model correctly identifies the handle, because that is where humans tend to grab coffee mugs. This also illustrates a potential weakness of relying solely on human-centric training data: for a robot, especially one that can comfortably handle a mug of hot coffee, a thin handle may be a less reliable grasping point than a more enveloping grip of the mug itself.

DeepMind’s Approach to Robotic Safety

Vikas Sindhwani, DeepMind’s head of robotics safety for the project, said the team took a layered approach to safety. It uses both physical safety controls, such as collision avoidance and stability management, and “semantic” safety systems that evaluate instructions and the consequences of following them. Sindhwani said these systems are most advanced in the Gemini Robotics-ER model, which is “trained to assess whether or not a possible action is safe in a given situation.” DeepMind is also releasing a data set and what it calls the Asimov benchmark.

In December, DeepMind announced a partnership with the humanoid-robotics company Apptronik.