Breaking Barriers: Accessible 3D Modeling for the Visually Impaired

Traditional 3D design platforms rely heavily on visual interactions such as dragging and rotating objects, which creates significant obstacles for people who are blind or have low vision. This limits their participation in fields like hardware design, robotics, engineering, and coding, where visual modeling is essential. Visually impaired programmers can write sophisticated code, but without accessible 3D modeling tools they cannot independently design, inspect, and validate the physical and virtual components of their projects.

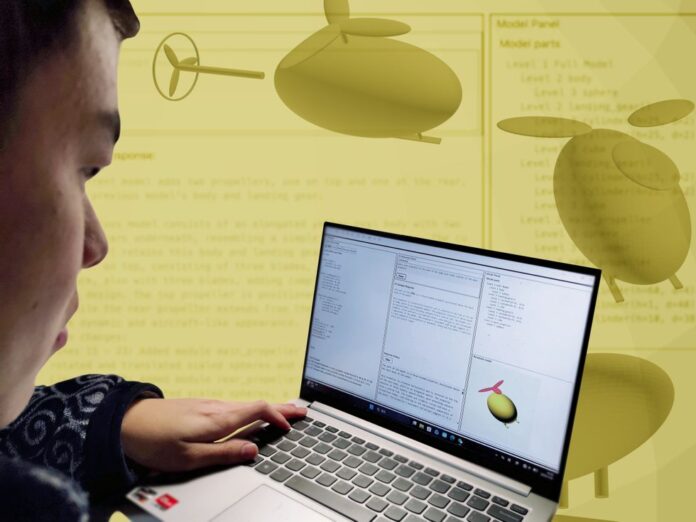

Innovative Solutions: Introducing A11yShape

Recent work is starting to change this. A new tool named A11yShape is designed to bridge the accessibility gap in 3D modeling. Existing text-based modeling software like OpenSCAD lets users script 3D objects, and emerging AI-driven platforms can generate 3D code from natural language prompts, but both still require sighted assistance to interpret the visual output. A11yShape closes this gap by enabling blind and low-vision programmers to create, inspect, and refine 3D models on their own, without relying on visual feedback from others.

How A11yShape Enhances Accessibility

A11yShape achieves this independence by producing detailed, accessible descriptions of models, structuring designs into a clear semantic hierarchy, and ensuring full compatibility with screen readers. This approach allows users to understand and manipulate their models through auditory and textual feedback rather than visual cues.

From Concept to Creation: The Origin of A11yShape

The project began when Liang He, an assistant professor of computer science at the University of Texas at Dallas, talked with a low-vision peer who was studying 3D modeling. Seeing the challenges involved, He set out to turn the coding techniques taught in a specialized 3D modeling course for blind programmers at the University of Washington into a practical, user-friendly tool. “My goal was to develop something genuinely useful for this community, not just a theoretical concept,” He explains.

Revolutionizing 3D Design with Script-Based Modeling

A11yShape is built to work seamlessly with OpenSCAD, a script-driven 3D modeling environment that eliminates the need for mouse-based interactions. Unlike conventional graphical interfaces, which are often inaccessible to visually impaired users, OpenSCAD allows model creation entirely through code.
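To make the script-based approach concrete, the following is a minimal sketch in Python that writes out a small OpenSCAD program as plain text. The Python helper names (`indent`, `block_with_hole_script`, and the wrappers around each statement) are illustrative assumptions and are not part of A11yShape or OpenSCAD; the emitted statements use OpenSCAD's built-in `cube`, `cylinder`, `translate`, and `difference` modules.

```python
def indent(block: str, spaces: int = 4) -> str:
    """Indent every line of a multi-line string."""
    pad = " " * spaces
    return "\n".join(pad + line for line in block.splitlines())


def cube(size) -> str:
    x, y, z = size
    return f"cube([{x}, {y}, {z}]);"


def cylinder(h, r) -> str:
    return f"cylinder(h={h}, r={r});"


def translate(offset, child: str) -> str:
    x, y, z = offset
    return f"translate([{x}, {y}, {z}])\n{indent(child)}"


def difference(*children: str) -> str:
    # OpenSCAD subtracts every later child from the first one.
    body = "\n".join(indent(child) for child in children)
    return "difference() {\n" + body + "\n}"


def block_with_hole_script() -> str:
    """Hypothetical example: a 20 x 20 x 10 block with a vertical hole."""
    block = cube((20, 20, 10))
    # The cylinder starts 1 unit below the block and is 12 units tall,
    # so it passes completely through the 10-unit-high block.
    hole = translate((10, 10, -1), cylinder(h=12, r=4))
    return difference(block, hole)


if __name__ == "__main__":
    print(block_with_hole_script())
```

The printed script describes the whole shape in a few lines of text, which is the property that makes this style of modeling workable with a screen reader: every dimension and position is a number in the code rather than a gesture on a canvas.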

Integrated AI Support for Real-Time Assistance

One of A11yShape’s standout features is its AI Assistance Panel, which connects users to OpenAI’s GPT-4o. This integration lets programmers ask questions, validate design choices, and debug OpenSCAD scripts in real time.

A11yShape synchronizes three key panels (the code editor, the AI-generated descriptions, and the model structure), allowing users to track how code modifications affect their designs without visual input.

When a user selects a code snippet or model element, A11yShape highlights the corresponding parts across all panels and updates the descriptive narration, ensuring users maintain full awareness of their current focus.
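One way to picture this kind of cross-panel synchronization is a tree in which each model element records the code lines that define it and a text description. The sketch below is a hypothetical illustration in Python, not A11yShape's actual implementation; the `ModelNode` type, the `select_line` function, and the sample mug hierarchy are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ModelNode:
    """One element of a (hypothetical) semantic hierarchy."""
    name: str
    first_line: int          # first code line that defines this element
    last_line: int           # last code line that defines this element
    description: str         # text a screen reader could announce
    children: list["ModelNode"] = field(default_factory=list)


def path_to_line(node: ModelNode, line: int) -> list[ModelNode]:
    """Return the chain of nodes from `node` down to the deepest one covering `line`."""
    if not (node.first_line <= line <= node.last_line):
        return []
    for child in node.children:
        sub_path = path_to_line(child, line)
        if sub_path:
            return [node] + sub_path
    return [node]


def select_line(root: ModelNode, line: int) -> Optional[dict]:
    """Compute what each of the three panels would focus on for a code line."""
    path = path_to_line(root, line)
    if not path:
        return None
    target = path[-1]
    return {
        "code_lines": (target.first_line, target.last_line),
        "hierarchy_path": " > ".join(n.name for n in path),
        "description": target.description,
    }


# Hypothetical hierarchy for a mug defined in eight lines of code.
mug = ModelNode("mug", 1, 8, "A mug: a hollow body with a handle.", [
    ModelNode("body", 2, 5, "A hollow cylinder, open at the top."),
    ModelNode("handle", 6, 7, "A ring attached to the side of the body."),
])

if __name__ == "__main__":
    print(select_line(mug, 6))
```

Selecting line 6 resolves to the `handle` element, so the code range, the position in the hierarchy, and the description to be read aloud all come from a single lookup and cannot drift out of sync with one another.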

Insights from User Testing and Feedback

The development team conducted usability studies with four participants who had a range of visual impairments and programming experience. The studies showed that even novices could create simple 3D structures using A11yShape, with one participant noting it “opened new avenues for the blind and low-vision community to engage in 3D modeling.”

However, users also highlighted challenges, such as the difficulty of comprehending complex shapes through lengthy textual descriptions alone. Many expressed the need for tactile feedback, via physical models or haptic devices, to fully conceptualize their designs.

To assess the reliability of the AI-generated descriptions, the team had 15 sighted evaluators rate them on geometric accuracy, clarity, and avoidance of hallucinations. Average scores ranged from 4.1 to 5 on a 5-point scale, which suggests the AI’s outputs are dependable for everyday modeling tasks.

Future Directions: Enhancing Multimodal Accessibility

Building on user feedback, the team plans to incorporate tactile interfaces, real-time 3D printing capabilities, and more succinct AI-generated audio summaries to further improve the user experience. These enhancements aim to provide a richer, multisensory understanding of 3D models.

Broader Impact: Lowering Barriers for Aspiring Programmers

Beyond professional applications, A11yShape serves as a gateway for blind and low-vision individuals entering the world of computer programming and digital fabrication. Stephanie Ludi, director of the DiscoverABILITY Lab and professor at the University of North Texas, emphasizes the importance of creative expression through technology. “People enjoy creating for both practical and recreational purposes. Individuals with visual impairments share this passion, and tools like A11yShape are vital for fostering inclusivity within the maker community,” she states.

The A11yShape project was showcased at the ASSETS conference in Denver, highlighting its potential to transform accessibility in 3D design.