Revolutionizing Robotics and Autonomous Vehicles with Nvidia’s Latest Innovations

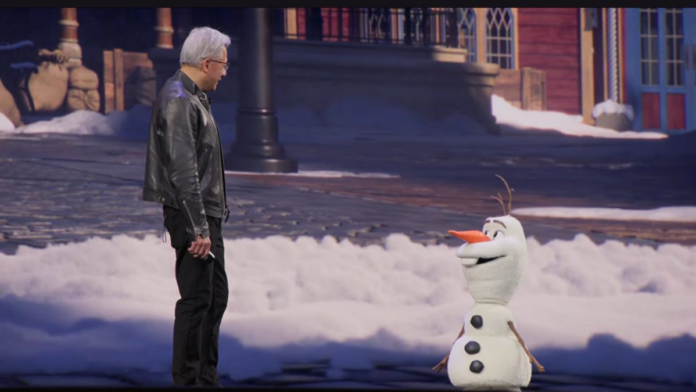

At the recent Nvidia GTC event, CEO Jensen Huang unveiled groundbreaking advancements in physical AI: artificial intelligence integrated into machines that operate in the real world, such as robots and autonomous vehicles. The highlight was a demonstration featuring a lifelike robot modeled after Olaf, the beloved snowman from Disney’s Frozen, powered by Nvidia’s Jetson platform and trained within the Omniverse simulation environment. Although the interaction was somewhat stilted, it showcased the potential for future robotic characters to roam theme parks like Disneyland, bringing immersive experiences to life through Nvidia’s cutting-edge technology.

Emerging Foundation Models for Enhanced Physical AI

Nvidia introduced several new foundation models designed to elevate the capabilities of robots and self-driving cars. Among these are:

- Cosmos 3: A synthetic world generator that creates complex virtual environments, enabling physical AI systems to better understand and navigate real-world scenarios.

- Isaac GR00T N1.7: An open reasoning vision-language-action (VLA) model tailored for humanoid robots, optimized for commercial deployment in diverse applications.

- Alpamayo 1.5: An advanced VLA model that enhances autonomous vehicle navigation by integrating driving video, motion history, and natural language prompts to produce precise driving trajectories.

Alpamayo 1.5 represents a significant leap forward in autonomous driving technology, enabling vehicles to adapt to unpredictable road conditions, weather changes, and pedestrian movements with greater safety and reliability. Currently, Nvidia’s partners are leveraging Cosmos 3 for training physical AI and deploying Isaac GR00T N1.7 to scale humanoid robot applications.

Expanding the Autonomous Vehicle Ecosystem

Jensen Huang emphasized that the era of self-driving cars powered by AI has truly arrived. Nvidia is deepening its collaboration with Uber, planning to deploy a fleet of robotaxis equipped with Nvidia’s Drive AV software across 28 cities worldwide by 2028, with early launches in Los Angeles and San Francisco slated for 2027. This expansion will allow Uber users to access autonomous rides on a much broader scale.

Additionally, Nvidia is partnering with major automakers such as BYD, Hyundai, Nissan, and Geely, joining existing collaborators like GM, Mercedes, and Toyota. These companies use Nvidia’s Drive Hyperion platform and Alpamayo models to develop Level 4 autonomous vehicles, meaning cars that can drive themselves without human intervention within a defined operating domain, such as specific areas or conditions.

Advancing Edge AI and Space-Based Computing

Nvidia is also pioneering edge AI solutions by collaborating with T-Mobile and Nokia to deploy AI radio access network (AI-RAN) infrastructure in remote and underserved areas. This approach transforms 5G networks into distributed AI computing platforms, enabling faster data processing with minimal latency, which is crucial for real-time physical AI applications such as traffic management and utility maintenance.

In the realm of space technology, Nvidia introduced platforms like Vera Rubin, IGX Thor™, and Jetson Orin™, designed to bring AI computing capabilities to orbital data centers and autonomous space operations. These innovations aim to process data locally in space, reducing reliance on Earth-based cloud systems and enabling intelligent decision-making for satellite constellations and deep-space exploration. While some components like Vera Rubin Space-1 are forthcoming, the groundwork for AI-powered space computing is rapidly taking shape.

Introducing the Physical AI Data Factory Blueprint

Recognizing the critical importance of high-quality training data for physical AI, Nvidia launched the Physical AI Data Factory Blueprint, an open-source reference architecture that streamlines the generation, augmentation, and evaluation of training datasets. Scheduled for release on GitHub next month, this framework leverages Nvidia’s Cosmos models to produce synthetic data at scale, including rare edge cases that are difficult to capture in real-world environments.

This comprehensive data pipeline supports reinforcement learning and rigorous testing for autonomous vehicles and robotics, reducing development costs and accelerating deployment timelines. Early adopters like Uber and Skild AI are already utilizing the Blueprint to enhance their autonomous systems.
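To make the generate, augment, and evaluate flow more concrete, here is a minimal toy sketch in Python. It is purely illustrative and is not the Blueprint's actual code or API, which has not been released: the `Scenario` schema, the function names, and the "snow with a crowd of pedestrians" edge case are all assumptions invented for this example.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    """One synthetic driving scenario (hypothetical schema, not Nvidia's)."""
    weather: str
    pedestrians: int
    is_edge_case: bool = False


def generate(n, seed=0):
    """Generation: sample n ordinary scenarios from a seeded random generator."""
    rng = random.Random(seed)
    return [
        Scenario(rng.choice(["clear", "rain"]), rng.randint(0, 5))
        for _ in range(n)
    ]


def augment(scenarios, edge_fraction=0.2):
    """Augmentation: append rare cases that real-world logs seldom contain."""
    extra = max(1, int(len(scenarios) * edge_fraction))
    rare = [Scenario("snow", 20 + i, is_edge_case=True) for i in range(extra)]
    return scenarios + rare


def evaluate(scenarios):
    """Evaluation: report simple coverage statistics for the dataset."""
    return {
        "total": len(scenarios),
        "edge_cases": sum(s.is_edge_case for s in scenarios),
        "weather_types": sorted({s.weather for s in scenarios}),
    }


dataset = augment(generate(10))
report = evaluate(dataset)
print(report)
```

In a real pipeline, the generation step would call a world model such as Cosmos rather than a random number generator, and the evaluation step would measure how a trained driving or robotics policy performs on the data, not just count scenarios.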

Implications for Industry and Everyday Life

The advancements in physical AI herald transformative changes across multiple sectors. Beyond consumer-facing applications such as autonomous ride-hailing and household robots, these technologies promise to revolutionize industrial operations, urban infrastructure, and entertainment venues. More intelligent, autonomous machines will increasingly operate on our roads, in factories, and even within theme parks, reshaping how we interact with technology in daily life.

Keywords:

Physical AI, Nvidia, autonomous vehicles, robotaxi, edge AI, AI-RAN, synthetic data, autonomous robots, AI computing, space computing